An Intent First way to launch conversational experiences without boiling the ocean

Most organisations launch a chatbot the same way they launch a knowledge base. They try to cover everything.

They start with a spreadsheet, list fifty topics, and spend months building a catalogue of flows that look impressive but rarely get used. Adoption stalls, the assistant feels brittle, and the conclusion becomes: people do not like chat.

The failure is not chat. It is the launch strategy.

If you want conversational experiences to work, think in intent, not topics. You do not need fifty entry points. You need three Core Journeys that people actually care about, and you need them to work reliably.

That is the Core Journey Launch Strategy: do not build 50 topics. Start with 3 Core Journeys.

A Core Journey is a high value, high frequency intent that happens constantly, matters when it happens, and can be resolved cleanly when the system understands the intent. These are the journeys where a conversational experience should deliver an outcome, not just direction.

This approach is tool agnostic. Whether you are using ServiceNow Virtual Agent, Microsoft Copilot Studio, Google Dialogflow, Amazon Lex, an embedded assistant in your intranet, or another platform, the pattern holds. Start narrow, launch fast, learn aggressively.

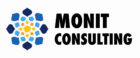

Core Journeys deliver outcomes in chat. Everything else is guided triage to the right destination.

Why three Core Journeys beat fifty topics

A topic list is a catalogue mindset. It asks: how do we cover the surface area of IT and HR?

An Intent First mindset is outcome driven. It asks: which journeys create the most friction, and how do we remove it?

When you start with three Core Journeys:

Quality beats coverage

In chat, "just okay" reads as dumb. People give you one chance. A small set of journeys lets you make each one excellent.

You learn faster

A small launch produces real behavioural data quickly. You see what people ask, where they drop off, and what language they use.

You earn trust

Adoption does not come from marketing. It comes from being reliably helpful in a few moments that matter.

You avoid rebuilding the portal inside chat

If your first release is a menu of options, you have rebuilt the portal inside a chat window. Intent First means users speak naturally and the platform does the translation work.

The Core Journey method, step by step

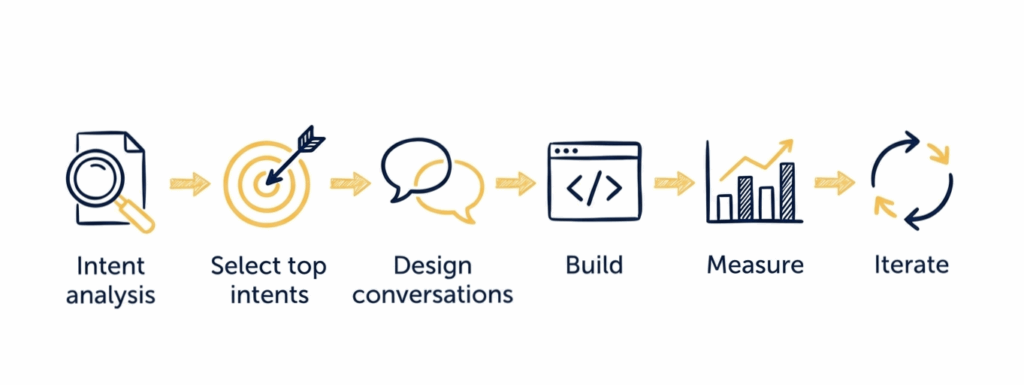

The loop is simple, because speed and feedback matter more than theoretical completeness.

Step 1: Intent analysis

Your first job is not designing conversations. It is understanding what people actually want.

Start with real demand signals:

• Top ticket categories and contact drivers

• Free text descriptions in incidents and requests

• Support channel messages such as email and Teams

• Repeat contacts and escalations

• The journeys users complain about as slow or confusing

You are looking for intents that are frequent, high friction, high confidence, and well bounded. High confidence means you can recognise them consistently. Well bounded means there is a clear done state and a predictable next action.

You will usually find that most volume clusters around a small number of themes such as access, password and authentication, connectivity, common software, device issues, and status checks.
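A first pass at this analysis can be very crude. The sketch below tallies free text descriptions against a handful of themes using keyword matching. The theme names, keywords, and sample tickets are all illustrative assumptions, not an export from any particular platform, and substring matching will misfire on some phrasing. It is a way to see where volume clusters, not a classifier.

```python
from collections import Counter

# Hypothetical theme keywords; replace with terms drawn from your own tickets.
THEME_KEYWORDS = {
    "password_and_authentication": ["password", "locked", "mfa", "login"],
    "connectivity": ["vpn", "wi-fi", "wifi", "network"],
    "access": ["access", "permission"],
    "status_check": ["status", "where is my"],
}


def tag_theme(text: str) -> str:
    """Return the first theme with a keyword found in the text, else 'other'."""
    lowered = text.lower()
    for theme, keywords in THEME_KEYWORDS.items():
        if any(keyword in lowered for keyword in keywords):
            return theme
    return "other"


def theme_volumes(descriptions: list[str]) -> list[tuple[str, int]]:
    """Count descriptions per theme, most frequent first."""
    return Counter(tag_theme(d) for d in descriptions).most_common()


# Illustrative sample, not real data.
sample = [
    "My account is locked",
    "VPN is not working",
    "I need access to the finance app",
    "Forgot my password again",
    "Where is my request",
    "Laptop fan is noisy",
]

for theme, count in theme_volumes(sample):
    print(f"{theme}: {count}")
```

Anything that lands in "other" at high volume is a prompt to read the raw text and add a theme, not a reason to build a topic.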

Step 2: Select the top intents

Now choose your three Core Journeys. Be ruthless.

A good Core Journey has:

• A clear done state that the user recognises

• A small set of required data points

• A predictable next action such as automation, fulfilment, or a clean handoff

Common examples that often qualify:

• Reset password or unlock account

• Fix connectivity such as VPN or Wi-Fi

• Request access to an application

• Track the status of a request

Now, be explicit about what happens for everything else, because this is where many teams either overbuild or underdesign.

For the other intents outside your Core Journeys, the goal is not a full conversation. The goal is guided triage. The assistant recognises what the user is trying to do and takes them to the right place, fast.

Sometimes that is a deep link to the correct request form. Sometimes it is the best knowledge article. Sometimes it is a clean handoff to a person, with a pre filled summary that captures the user’s intent and context. In those cases, success is correct navigation, not containment.

This is how you avoid building fifty conversations while still covering the long tail. Your assistant acts as a concierge, not a fulfilment agent, for non Core intents.

One more practical rule: do not pick three Core Journeys that all depend on the same fragile integration. Mix quick wins with one slightly harder journey if the value justifies it.
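The selection rules in this step can be written down as a small filter. The sketch below is one possible reading, under stated assumptions: the volumes, the integration labels, the "Update group membership" and "Laptop refresh" candidates, and the limit of four data points are all hypothetical. It keeps candidates with a clear done state and few required data points, then picks the highest volume set of three that does not rest on a single integration.

```python
from dataclasses import dataclass
from itertools import combinations


@dataclass(frozen=True)
class Candidate:
    name: str
    monthly_volume: int        # how often the intent occurs
    clear_done_state: bool     # would the user recognise "done"?
    required_data_points: int  # questions the assistant must ask
    integration: str           # hypothetical label for the backing system


def qualifies(c: Candidate, max_data_points: int = 4) -> bool:
    """Assumed bar: clear done state and a small set of required data."""
    return c.clear_done_state and c.required_data_points <= max_data_points


def pick_core_journeys(candidates: list[Candidate], count: int = 3) -> list[Candidate]:
    """Highest-volume qualifying set that spans at least two integrations."""
    eligible = [c for c in candidates if qualifies(c)]
    best: tuple[Candidate, ...] = ()
    best_volume = -1
    for combo in combinations(eligible, count):
        if len({c.integration for c in combo}) < 2:
            continue  # all journeys would depend on the same integration
        volume = sum(c.monthly_volume for c in combo)
        if volume > best_volume:
            best, best_volume = combo, volume
    return list(best)


# Illustrative numbers only.
candidates = [
    Candidate("Reset password or unlock account", 900, True, 2, "directory"),
    Candidate("Request access to an application", 600, True, 3, "directory"),
    Candidate("Update group membership", 500, True, 3, "directory"),
    Candidate("Fix VPN connectivity", 400, True, 3, "network"),
    Candidate("Track the status of a request", 350, True, 1, "ticketing"),
    Candidate("Laptop refresh", 1000, False, 6, "asset"),
]

for journey in pick_core_journeys(candidates):
    print(journey.name)
```

In this sample the three highest volume qualifying candidates all sit on the same "directory" integration, so the sketch swaps the third for the VPN journey. Treat the output as a starting point for a conversation, not a decision.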

Step 3: Design conversations for humans, not forms

A strong Core Journey conversation does four things.

Start from natural language

Design entry phrases users will actually type:

• My account is locked

• VPN is not working

• I need access to X

• Where is my request

Even in platforms like ServiceNow Virtual Agent, Copilot Studio, Dialogflow, or Lex, where you map to topics, intents, and entities, the design goal stays the same. Recognise messy human phrasing and route to a structured outcome.

Ask only what you must

Every question is friction. If you can infer identity, device type, location, or request history, do not ask. Collect only what you need to take the next action.

Confirm you understood

A single confirmation line prevents spirals:

Sounds like you want to reset your password. Is that right?

Provide a clean off ramp

When confidence is low, do not trap the user. Offer one of three exits:

• navigation to the right form

• navigation to a targeted article

• handoff to a person with a clean summary

That off ramp is not a failure. It is part of the strategy. The assistant is still reducing translation effort, because the user does not have to guess categories, subcategories, or the right portal page.
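The confirm and off ramp logic above can be sketched as a single routing decision. This is a minimal illustration, assuming the platform returns an intent name and a confidence score between 0 and 1. The threshold value, intent names, and action labels are placeholders to tune against pilot data, not settings from any specific product.

```python
from dataclasses import dataclass

# Hypothetical Core Journey intent names.
CORE_JOURNEYS = {"reset_password", "fix_connectivity", "request_access"}

# Assumed threshold; tune against real recognition results.
CONFIDENCE_THRESHOLD = 0.75

# The three exits offered when confidence is low.
OFF_RAMP_OPTIONS = ["form", "article", "handoff_with_summary"]


@dataclass
class Recognition:
    intent: str
    confidence: float


def next_step(r: Recognition) -> tuple[str, list[str]]:
    """Decide what the assistant does with a recognised intent."""
    if r.confidence < CONFIDENCE_THRESHOLD:
        # Low confidence: do not trap the user, offer the exits.
        return ("off_ramp", OFF_RAMP_OPTIONS)
    if r.intent in CORE_JOURNEYS:
        # Confident Core Journey: confirm in one line, then continue.
        return ("confirm_and_continue", [])
    # Confident but outside the Core Journeys: guided triage.
    return ("guided_triage", ["form", "article"])


print(next_step(Recognition("reset_password", 0.92)))
print(next_step(Recognition("reset_password", 0.40)))
```

Keeping the decision in one place makes it easy to review which path each failed conversation took.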

Step 4: Build a thin slice, end to end

Build each Core Journey as a working thin slice:

• Recogniser, so the system detects the intent and captures key details

• Conversation, so you collect what is required

• Action, so something real happens, whether that is automation, fulfilment, routing, or knowledge

• Closure, so the user knows what happened and what will happen next

Do not build parts. Build outcomes. A half built assistant teaches users not to trust it.

Step 5: Measure the pilot properly

If you do not measure, you will default to opinions and add more topics. Instead, run a simple scoreboard that tells you whether users succeed and where they fail.

Keep it lightweight. You do not need perfect analytics to learn. You need a few signal metrics that align to your strategy: three Core Journeys plus reliable routing for everything else.

Pilot measurement, six metrics only

1. Sessions started, split by Core Journeys and non Core routing

2. Completion rate for Core Journeys

3. Handoff rate to a person

4. Drop off rate and the highest drop off step

5. Time to outcome for Core Journeys

6. Correct navigation rate for non Core intents

Also, watch the reversion rate (people start with the assistant, then immediately switch to a call or ticket). This is an early trust alarm.

Correct navigation rate can be measured through quick feedback, link click tracking, or short follow up prompts such as: Did that take you to the right place?

These metrics answer the only questions that matter in a pilot:

• Are people using it?

• Are they succeeding?

• Where are they failing?

• Is routing helpful for the long tail?
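The six metrics can be tallied from a simple session log. The sketch below assumes a hypothetical record per session. The field names are invented for illustration, drop off is measured over Core Journey sessions only, and time to outcome is reported as a median. Those are choices made for the example, not requirements.

```python
from collections import Counter
from dataclasses import dataclass
from statistics import median
from typing import Optional


@dataclass
class Session:
    core_journey: Optional[str]               # None means non Core routing
    completed: bool = False
    handed_off: bool = False
    dropped_at_step: Optional[str] = None
    seconds_to_outcome: Optional[float] = None
    navigation_correct: Optional[bool] = None  # answer to a follow up prompt


def rate(part: int, whole: int) -> float:
    """Share of the whole, rounded to two places; 0.0 when there is no data."""
    return round(part / whole, 2) if whole else 0.0


def scoreboard(sessions: list[Session]) -> dict:
    core = [s for s in sessions if s.core_journey]
    non_core = [s for s in sessions if not s.core_journey]
    drops = Counter(s.dropped_at_step for s in core if s.dropped_at_step)
    times = [s.seconds_to_outcome for s in core
             if s.completed and s.seconds_to_outcome is not None]
    rated = [s for s in non_core if s.navigation_correct is not None]
    return {
        "sessions_core": len(core),
        "sessions_non_core": len(non_core),
        "core_completion_rate": rate(sum(s.completed for s in core), len(core)),
        "handoff_rate": rate(sum(s.handed_off for s in sessions), len(sessions)),
        "drop_off_rate": rate(sum(drops.values()), len(core)),
        "highest_drop_off_step": drops.most_common(1)[0][0] if drops else None,
        "median_seconds_to_outcome": median(times) if times else None,
        "correct_navigation_rate": rate(
            sum(bool(s.navigation_correct) for s in rated), len(rated)),
    }


# Illustrative sessions, not real data.
sessions = [
    Session("reset_password", completed=True, seconds_to_outcome=60),
    Session("reset_password", completed=True, seconds_to_outcome=90),
    Session("fix_connectivity", dropped_at_step="collect_device"),
    Session("fix_connectivity", handed_off=True),
    Session(None, navigation_correct=True),
    Session(None, navigation_correct=False),
]

for metric, value in scoreboard(sessions).items():
    print(f"{metric}: {value}")
```

A spreadsheet does the same job. What matters is that the same few numbers are reviewed every cycle.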

Step 6: Iterate with a short improvement loop

Many teams do not have capacity for weekly changes, and that is fine. The goal is not a weekly release. The goal is a short, consistent improvement loop.

During a pilot, weekly reviews are ideal if you can manage them, because the feedback is fresh and the fixes are usually small. But a fortnightly cadence is more realistic for many platform teams, and a monthly cadence can still work if you are disciplined.

The rule is simple: pick one improvement per cycle, based on evidence.

Each cycle:

• Review the single highest drop off step in each Core Journey

• Review the most common handoff reason

• Review the top three failed recognitions and add or refine training phrases

• Improve one thing, then measure again
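Finding the top three failed recognitions does not need special tooling. The sketch below assumes you can export pairs of utterance and a recognised flag from your platform. That export format and the sample utterances are assumptions for illustration.

```python
from collections import Counter


def top_failed_phrases(log: list[tuple[str, bool]], limit: int = 3) -> list[tuple[str, int]]:
    """Most common unrecognised utterances, ignoring case and extra spaces."""
    failed = (
        " ".join(utterance.lower().split())
        for utterance, recognised in log
        if not recognised
    )
    return Counter(failed).most_common(limit)


# Illustrative log of (utterance, recognised) pairs, not real data.
log = [
    ("cant get on vpn", False),
    ("Cant get on VPN", False),
    ("reset my password", True),
    ("locked out", False),
    ("printer jam", False),
    ("locked  out", False),
    ("badge not working", False),
]

for phrase, count in top_failed_phrases(log):
    print(f"{count}x {phrase}")
```

Each phrase that surfaces here is a candidate training phrase for an existing journey or a candidate entry in the non Core routing map.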

A conversational launch should behave like a product: ship, learn, adjust.

The mindset shift that makes this work

The Core Journey strategy is a discipline. You win trust through a few high craft experiences, then expand based on evidence, not guesses.

Starting smaller is how you go faster.

Once your first three Core Journeys are stable, choose the next three using the same loop. Over time, the assistant becomes both a fulfilment channel for high value journeys and a concierge for everything else. That balance is what creates adoption.

A practical next step

If you want a structured way to choose your first three Core Journeys, map the long tail routing, and launch a pilot without overbuilding, you can explore the Intent First Virtual Agent Assessment.

It starts with a 2 week Intent Snapshot, where we will:

• Review demand signals across tickets, free text, and contact drivers

• Identify and prioritise your top three Core Journeys

• Define success measures and a lightweight pilot scoreboard

• Produce build ready conversation blueprints for each Core Journey, including off ramps and required data

• Create a non Core routing map so the assistant can guide people to the right form, article, or human support fast

If you would like to run an Intent Snapshot, message me and I will send the outline and prerequisites.

You do not win adoption by covering everything. You win it by making a few journeys feel effortless, then expanding from evidence.